SCCM 2012: Get Locked Apps/Packages

# Lists objects currently locked (SEDO locks) along with the package or application name.
$query = "SELECT SEDO_LockState.LockStateID,
       SEDO_LockState.AssignedUser,
       SEDO_LockState.AssignmentTime,
       SEDO_LockState.AssignedMachine,
       v_SmsPackage.Name,
       fn_ListApplicationCIs.DisplayName,
       fn_ListApplicationCIs.Manufacturer
FROM SEDO_LockState
INNER JOIN SEDO_LockableObjects ON SEDO_LockState.LockID = SEDO_LockableObjects.LockID
INNER JOIN SEDO_LockableObjectComponents ON SEDO_LockableObjects.ObjectID = SEDO_LockableObjectComponents.ObjectID
LEFT OUTER JOIN v_SmsPackage ON SEDO_LockableObjectComponents.ComponentID = v_SmsPackage.SEDOComponentID
LEFT OUTER JOIN CI_ConfigurationItems ON SEDO_LockableObjectComponents.ComponentID = CI_ConfigurationItems.SEDOComponentID
LEFT OUTER JOIN fn_ListApplicationCIs(1033) ON CI_ConfigurationItems.CI_UniqueID = fn_ListApplicationCIs.CI_UniqueID
WHERE SEDO_LockState.LockStateID <> 0"

# Fill in your site database server and database name.
$server = ""
$db = ""
$constring = "Server=$server;Database=$db;Integrated Security=True"

# Connect with the current Windows credentials and run the query.
$connection = New-Object System.Data.SqlClient.SqlConnection
$connection.ConnectionString = $constring
$connection.Open()
$command = $connection.CreateCommand()
$command.CommandText = $query
$result = $command.ExecuteReader()

# Load the reader into a DataTable so the connection can be closed before display.
$table = New-Object System.Data.DataTable
$table.Load($result)
$connection.Close()

$table.Rows | Format-Table

PowerShell: Run via SCCM with Administrative rights.

If you have tried to run a PowerShell script with SCCM before, you might have found it odd and not exactly intuitive. Here are a couple of tips.

The most frustrating part of this problem is simply...not being able to tell what is wrong. The error message comes and goes before you have a chance of seeing it.

It isn't necessary for you to do this (as I've already done it), but to diagnose the problem I modified my SCCM command line as follows:

%COMSPEC% /K powershell.exe -noprofile -file script.ps1 -executionpolicy Bypass

When running this, the first thing you will notice is an error from cmd.exe saying UNC paths are not supported and that it is reverting to the Windows directory. Now your script won't launch, because the working directory is wrong (it WOULD have been the network share on your distribution point). To get around THIS you have to set the following:

Key: HKLM\Software\Wow6432Node\Microsoft\Command Processor

Value: DisableUNCCheck

Data (DWORD): 1

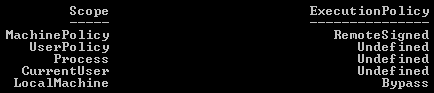
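For anyone who would rather script that registry change than click through regedit, here is a minimal sketch. It assumes an elevated PowerShell session; the key path, value name, and data are the ones listed above, and nothing else about your environment is assumed.

```powershell
# Key, value name and data are taken from the post; run from an elevated session.
$key = 'HKLM:\Software\Wow6432Node\Microsoft\Command Processor'

# Create the key if it does not exist yet.
if (-not (Test-Path $key)) {
    New-Item -Path $key -Force | Out-Null
}

# Write DisableUNCCheck = 1 as a DWORD, overwriting any existing value.
New-ItemProperty -Path $key -Name 'DisableUNCCheck' -PropertyType DWord -Value 1 -Force | Out-Null

# Read it back to confirm.
(Get-ItemProperty -Path $key).DisableUNCCheck
```

This only exists to make the /K troubleshooting prompt usable, so you may want to remove the value again once you are done.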

Now if you run your program again, you will see it says the execution of scripts on the local machine has been disabled. Luckily you have a handy dandy command prompt (thanks to the /K switch), so you can type powershell -command "Get-ExecutionPolicy -list".

You will see that everything is Undefined. If you go open up a regular command prompt and type the same thing, you should see whatever your actual settings are (in my case it was Bypass set to the LocalMachine scope and everything else undefined, this was set to Bypass for TESTING reasons).

A whoami in the SCCM-launched command prompt shows nt authority\system, as you would expect.

So the problem appears to be that when run as the system account, -executionpolicy is ignored, and PowerShell doesn't appear to be getting or setting its execution policy in the same place everything else is.

For instance, right now on the same machine I have two windows open: one is PowerShell run as administrator (via a domain account in the local admins group), the other is the command prompt SCCM launches. Here are the Get-ExecutionPolicy -list results from each:

Local Admin:

SCCM:

Same machine, two different settings. First attempt was to use:

powershell.exe -noprofile -command "Set-ExecutionPolicy Bypass LocalMachine" -File script.ps1

This failed, and ultimately it appears that powershell.exe will run either -command or -file, but not both.

So the solution to running PowerShell scripts as admin via SCCM is to do the following:

Create an SCCM Program with the following command line:

powershell.exe -noprofile -command "Set-ExecutionPolicy Bypass LocalMachine"

Then one with the following:

powershell.exe -noprofile -file script.ps1

And finally a cleanup program:

powershell.exe -noprofile -command "Set-ExecutionPolicy RemoteSigned LocalMachine"

Obviously RemoteSigned should be replaced with whatever your organization has decided on as its standard execution policy. The default, I believe, is Restricted; most will probably use RemoteSigned, and the security (over)conscious will probably use AllSigned.

Is this ideal? No. Of course not. Having it pay attention to the -ExecutionPolicy switch would be ideal.

But it works.
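One thing that may be worth ruling out before settling on three programs: powershell.exe treats everything after -File as the script path followed by the script's own arguments. In the troubleshooting command line used earlier, -executionpolicy Bypass comes after -file script.ps1, so it would be handed to the script rather than to PowerShell. A sketch with the switch moved in front of -File follows. I have not verified it in the SCCM-as-SYSTEM scenario described here, and a policy set by GPO (MachinePolicy or UserPolicy) would still take precedence over it.

```powershell
# -ExecutionPolicy appears BEFORE -File, so powershell.exe parses it itself.
# Anything placed after "-File script.ps1" is passed to script.ps1 as an argument.
powershell.exe -NoProfile -ExecutionPolicy Bypass -File script.ps1
```

If that works in your environment, it sets the policy for that one process only and leaves the LocalMachine setting alone, so no cleanup program is needed.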

And on a bit of a side tangent, I think it is a pretty "Microsoft" view of security to put this rather convoluted security system in place, and then still have VBScripts executable right out of the gate on a Windows 7 box. And batch files.

What is the point of all this obnoxious security when, at the end of the day...they can just use VBScript. Had they made you toggle a setting somewhere to enable VBScript and Batch files, had they not made 5 scopes of security policy for powershell, had they basically not admitted that they don't know how to do a secure scripting language (your security should probably come from the user rights, and not purely the execution engine, though, that's a finer point you could argue several ways), I'd probably be a lot more sympathetic.

Had SCCM not been such a myopic monolithic dinosaur, this wouldn't be a problem. These are all symptoms of what will eventually kill them. Legacy. 64-bit filesystem redirection, the registry as a whole, more specifically Wow6432Node, Program Files (x86), needing to use PowerShell, VBScript or Batch files to do a simple file transfer/shortcut placement.

This is System Center Configuration Manager. It can use BITS to copy a multi-gigabyte install "safely" to a client machine...but only if it's then going to run an installer.

It can't copy a file and place a shortcut, or add a key to the registry. It only manages the configuration in the most outmoded and obsolete ways possible.

/rant

A few quick notes. You see how MachinePolicy is set? That WILL override your SCCM packages. It means a GPO is controlling the ExecutionPolicy, so while your Set-ExecutionPolicy command will run, the setting it writes will be overruled.

The second note is that the two results differ because the SCCM version is using the x86 settings, not the x64 ones. While that explains the difference, it does not explain why -ExecutionPolicy Bypass is ignored, nor why running a script as a user in SCCM works fine but running it as an admin does not. The end result is that you still need the workaround.
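To see the x86/x64 split for yourself, a small sketch like the one below can be run from any prompt, including the one SCCM launches. It assumes 64-bit Windows with PowerShell in its default location. The Sysnative alias is only visible to 32-bit processes, which is why the second half is guarded with Test-Path.

```powershell
# 8-byte pointers mean a 64-bit process, 4-byte pointers a 32-bit one.
$bitness = if ([IntPtr]::Size -eq 8) { 'x64' } else { 'x86' }
"Running as $bitness PowerShell"

# Execution policy as seen by THIS process.
Get-ExecutionPolicy -List

# From a 32-bit process on 64-bit Windows, Sysnative reaches the real (64-bit) System32,
# so the 64-bit settings can be listed for comparison.
$native = "$env:windir\Sysnative\WindowsPowerShell\v1.0\powershell.exe"
if (Test-Path $native) {
    & $native -NoProfile -Command 'Get-ExecutionPolicy -List'
}
```

If the two lists differ, any Set-ExecutionPolicy you run only changes the view belonging to the bitness of the process that ran it.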

App-V: Clearing the cache...the hard way.

Ever cleared an application only to find it didn't delete everything? Clearing something from ProgramData is no big deal, as this can be done fairly easily by anything running as the system account (SCCM, for example). Clearing a user's profile is another story.

If you have used a command prompt much at all, then 'rmdir' is nothing new to you. What you may not realize is that rmdir isn't an executable; it's a command built into cmd.exe. So calling "rmdir" directly in an SCCM package will net an unusable package. The workaround is thus:

%COMSPEC% /c rmdir /q /s "%USERPROFILE%\AppData\Local\SoftGrid Client\<FolderName>"

Put this in SCCM, set it to run as user, and it should clear the folder just fine.
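If you would rather do the same cleanup from PowerShell, a rough equivalent is sketched below. Remove-FolderIfPresent is a hypothetical helper name of my own, and the demo folder under the temp directory stands in for the real SoftGrid Client folder. Keep in mind that running a script through SCCM brings the execution policy hurdles from the previous post, so the %COMSPEC% one-liner is still the simpler route.

```powershell
# Hypothetical helper: deletes a folder and its contents, like rmdir /q /s.
function Remove-FolderIfPresent {
    param(
        [Parameter(Mandatory = $true)]
        [string]$Path
    )
    if (Test-Path -LiteralPath $Path -PathType Container) {
        Remove-Item -LiteralPath $Path -Recurse -Force
        return $true
    }
    # Nothing to delete.
    return $false
}

# Demo against a throwaway folder; point it at the folder under
# "$env:USERPROFILE\AppData\Local\SoftGrid Client" when using it for real.
$demo = Join-Path ([System.IO.Path]::GetTempPath()) 'SoftGridDemo'
New-Item -ItemType Directory -Path $demo -Force | Out-Null
$removed = Remove-FolderIfPresent -Path $demo
```

As with the rmdir version, this needs to run as the user so that it resolves to that user's profile.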

In this case, to clear an app I used something along the same lines as what I use to clear Office.

sftmime.exe CLEAR APP:"<AppName>" /LOG "C:\InstallLogs\Clear.log"

Run the %COMSPEC% package.

sftmime.exe DELETE PACKAGE:"{<PackageName>}" /LOG "C:\InstallLogs\Delete.log"

sftmime.exe REFRESH SERVER:<ServerName> /LOG "C:\InstallLogs\Refresh.log"

Set the programs each to Start In: C:\Program Files (x86)\Microsoft Application Virtualization Client

Clearing the app can be done as admin or as the user. Running as the user gives the best chance of clearing the folder you are trying to delete, but if the user doesn't have clear permissions this will fail (the default permissions should allow a Clear, if I recall).

Deleting the package is again a case of rights. Most likely you will want to run this as admin so that it is deleted globally and you are assured of having permission to do so.

Refresh should be run as the user so the USER contacts the publishing server to retrieve their software.

In this case the refresh is needed because an application was updated, and the update pulled down the new OSD but failed to pull down the updated SFT, which left the application in a non-functional state. I still want the user to have the application without needing to log off, so I clear it, delete the folder, delete the package, then refresh the publishing server so they get their app back seamlessly.

Thanks to Justin for the tweak to make this work with SCCM.